There seems to be a cycle taking shape around many modern technological milestones. First, a community (be it a company or a collection of disparate open source teams) creates a genuine advancement in science, and then the marketing overhypes it as some kind of magic bullet that will bring salvation to us all. Of course, that salvation never really arrives, because the technology isn’t at the level it’s presented to be (at least not yet). That doesn’t stop a bunch of fanatics and well-meaning but misinformed members of the public from driving right into the abyss.

Maybe there’s no better example of this discovery-hypermarketing cycle than Web3. On the one hand you’ve got cryptocurrencies and blockchain-based non-fungible tokens with real potential to upset how we use and interact with technology. On the other you’ve got the fratboys and snake oil salesmen who have proliferated on the internet with their incessant talk about how all of this is headed “to the moon.”

But I don’t want to turn this into another blog post about crypto. What really got me out of bed today is an article from the New York Times arguing that teachers should be teaching with ChatGPT rather than banning it from the classroom. One particular paragraph from the article had me doubling over:

> It’s easy to understand why educators feel threatened. ChatGPT is a freakishly capable tool that landed in their midst with no warning, and it performs reasonably well across a wide variety of tasks and academic subjects.

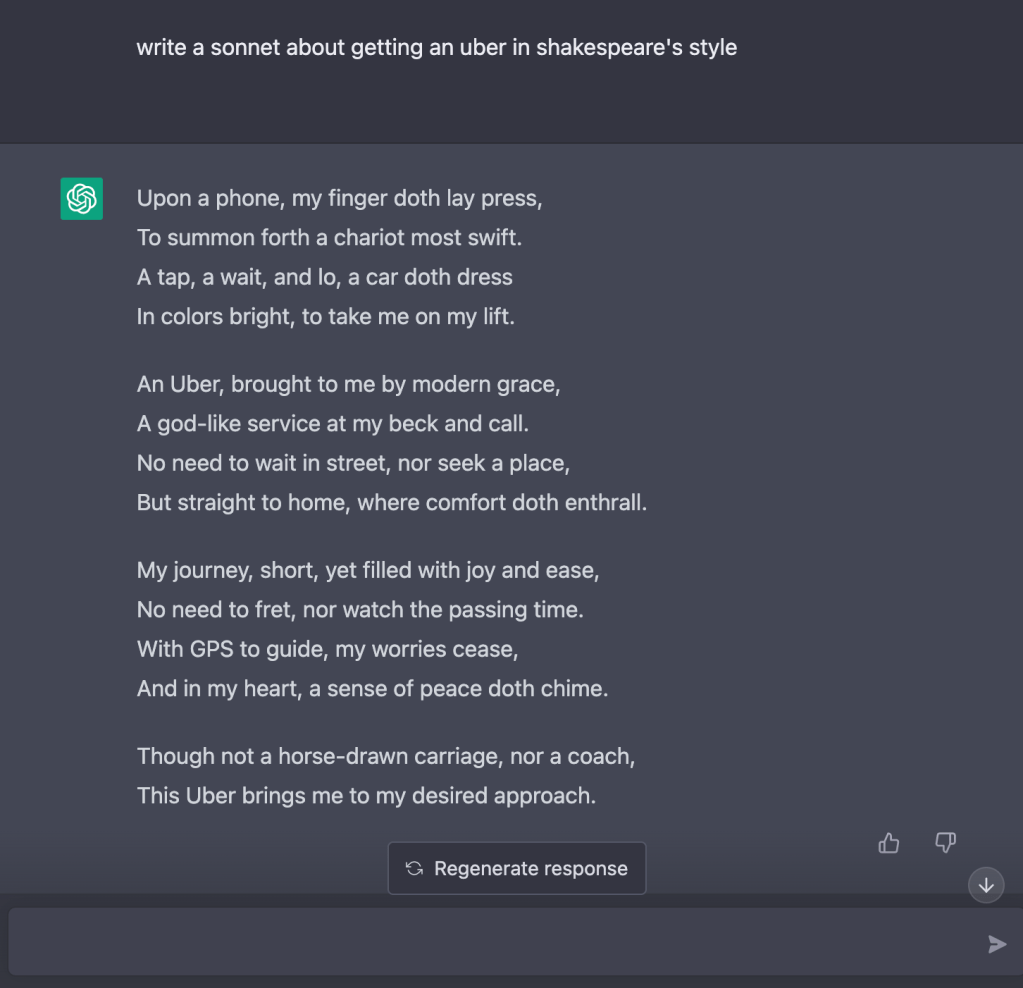

I should probably back up a little bit. ChatGPT, the chatbot built on a model from the GPT-3.5 series (GPT stands for Generative Pre-trained Transformer) by non-profit/kind-of-for-profit artificial intelligence venture OpenAI, was released in November 2022 and quickly gained traction on the internet for its detailed and semantically sensible responses. The platform OpenAI created for the public preview is excellent at making the experience seem surreal: you are given a large text area where you type a question or any open-ended prompt, and the AI responds with highly probable, human-sounding prose.

The timing lines up neatly with other developments in AI. Two months prior to ChatGPT’s public release, OpenAI also released DALL-E 2 to the wider public: the updated version of its model capable of generating images from natural language prompts. Midjourney, another image-generating AI, was released as a public beta in July 2022.

In the case of Midjourney and DALL-E 2, pundits were quick to call an end to the visual arts as a purely human venture, claiming human creativity has finally been hacked. Meanwhile, professionals in the field called out these projects for effectively exploiting the work of real, human artists (while the AI generates new images, this capability is trained on a labelled corpus of millions of real artworks created beforehand).

With ChatGPT, however, people were quick to realize that a model optimized for question-and-answer exchanges could be used to generate almost anything: from status updates to full-on essays, including software code. Given the level of hype it’s been getting, this seemingly overpowered tool is prone to abuse, and this is where a lot of the controversy surrounding ChatGPT stems from. Naturally, all sorts of people, from students to full-time professionals, have tried to augment or outright outsource their tasks to the chatbot. After all, if you have access to an AI that can do your homework for you, well, why wouldn’t you use it?

Coming back to the New York Times article, what needs to be clarified is that ChatGPT is not freakishly capable, at least not as a knowledge machine. It’s a large language model, which means it wasn’t designed with generating accurate information in mind. Rather, it’s a highly sophisticated pattern-matching tool that attempts to predict how a human might write a response to a given prompt. In other words, it’s the smarter version of the talking toys people give their dogs so the dogs can ask for treats in creepy robotic voices.
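To make the "predict what a human might write next" idea concrete, here is a toy sketch in Python. It is emphatically not how GPT works internally (real models use large neural networks over tokens, not a lookup table of word pairs), and the names `train_bigrams` and `generate` are made up for this post. The core point still carries over: the program only knows which words tend to follow which, and has no notion of whether the result is true.

```python
import random
from collections import defaultdict


def train_bigrams(text):
    """Map each word to the list of words that followed it in the text."""
    followers = defaultdict(list)
    words = text.split()
    for current, nxt in zip(words, words[1:]):
        followers[current].append(nxt)
    return followers


def generate(followers, start, length, seed=0):
    """Repeatedly pick a plausible next word; stop early at a dead end."""
    rng = random.Random(seed)
    out = [start]
    for _ in range(length - 1):
        options = followers.get(out[-1])
        if not options:  # no word ever followed this one in the corpus
            break
        out.append(rng.choice(options))
    return " ".join(out)


# Hypothetical miniature "training corpus" for illustration only.
corpus = "the cat sat on the mat and the dog sat on the rug"
model = train_bigrams(corpus)
sentence = generate(model, "the", 8)
print(sentence)
```

Every word pair in the output appeared somewhere in the corpus, so the result sounds locally plausible, yet nothing checks it against reality. Scale that idea up by many orders of magnitude and you get fluent text that can still be confidently wrong.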

This essential feature (I wouldn’t call it a bug) in GPT-3’s design is evident in the many elementary mistakes it makes. AI researchers Gary Marcus and Ernest Davis have started an open source project documenting these errors, and the examples range from ChatGPT being unable to count to four to failing a simple story comprehension activity. I think even Gary Marcus is being too generous in calling ChatGPT a tool. A calculator is a tool because it’s designed to perform a task with near-100% reliability. Using ChatGPT as a tool for homework or teaching would be like bringing a banana to work expecting it to run spreadsheets.

To wit: Stack Overflow, the massive forum and all-around reference tool for programmers, was quick to ban responses containing ChatGPT-generated code for the simple reason that they tend to be wrong, often in ways that a novice would have trouble spotting. Meanwhile, services have popped up offering the (alleged) capability to detect whether a given paper is AI-generated. (AI trying to catch AI: we really are living in the future!)

I don’t want to devalue the achievement that the people at OpenAI have made with GPT-3 and ChatGPT. As far as AI goes, these models really are the state of the art. They are awesome markers of the terrifying speed at which deep learning has progressed over the years, but they are also sobering reminders of very fundamental problems that will need to be addressed in the future. I don’t doubt that students should be taught about AI, if only to give them a proper sense of what is and isn’t possible at present, and how best to approach these models in the wild. But to suggest that these models, at least in their present form, should augment student learning is dangerous, especially given how wrong they can be in very subtle ways.

So how does the hypermarketing cycle end? I think it’s too early to say with confidence that all of these will end in the same way, but I think it’s prudent to take a long and sober look at the very rough year the cryptocurrency space has had. Eventually, the house of cards is doomed to collapse in on itself, and a winter takes hold. This is often bad for the researchers and actual scientists who are working passionately around the clock trying to advance the field, but I think it’s a necessary evil for the rest of humanity. How do we learn not to create theme parks full of man-eating dinosaurs? Sometimes, the dinosaurs just have to be let out.
